The Ethics Trap

Most candidates suggest "adding a disclaimer" or "filtering keywords." Stop. These are "Surface-Level" fixes that users easily bypass. Ethical AI requires Structural Guardrails built into the model's training and the product's inference layer.

The Core Framework: The "3-G" Safety Model

1. Grounding (The "Truth" Layer)

Ensure the AI isn't just "dreaming"; it must be anchored in verified data.

- The Strategy: Use RAG (Retrieval-Augmented Generation) to restrict the AI's "Creative License."

- The Soundbite: "I don't just 'Hope' the AI is right. I ground the output in our proprietary knowledge base. If the AI can't find a source for a claim, it is programmed to say 'I don't know' rather than hallucinating a plausible lie."

2. Guardrails (The "Inference" Layer)

Implement real-time monitoring of inputs and outputs.

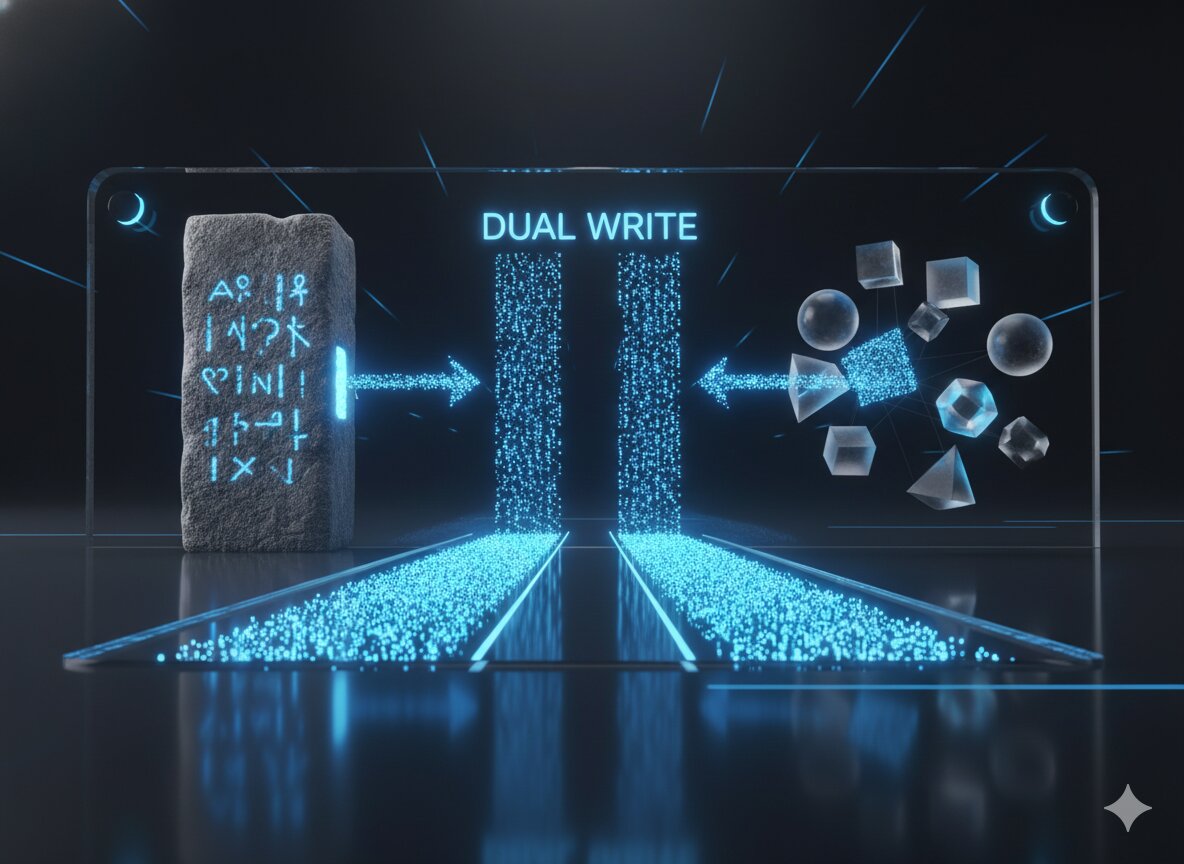

- The Tactics: Use a "Moderation Model" to shadow the main LLM.

- The Soundbite: "We use a 'Dual-Model' architecture. Before an output hits the user, a smaller, highly-tuned 'Safety Model' audits it for bias, toxicity, or PII (Personally Identifiable Information). If it fails, the user gets a pre-defined safe response."

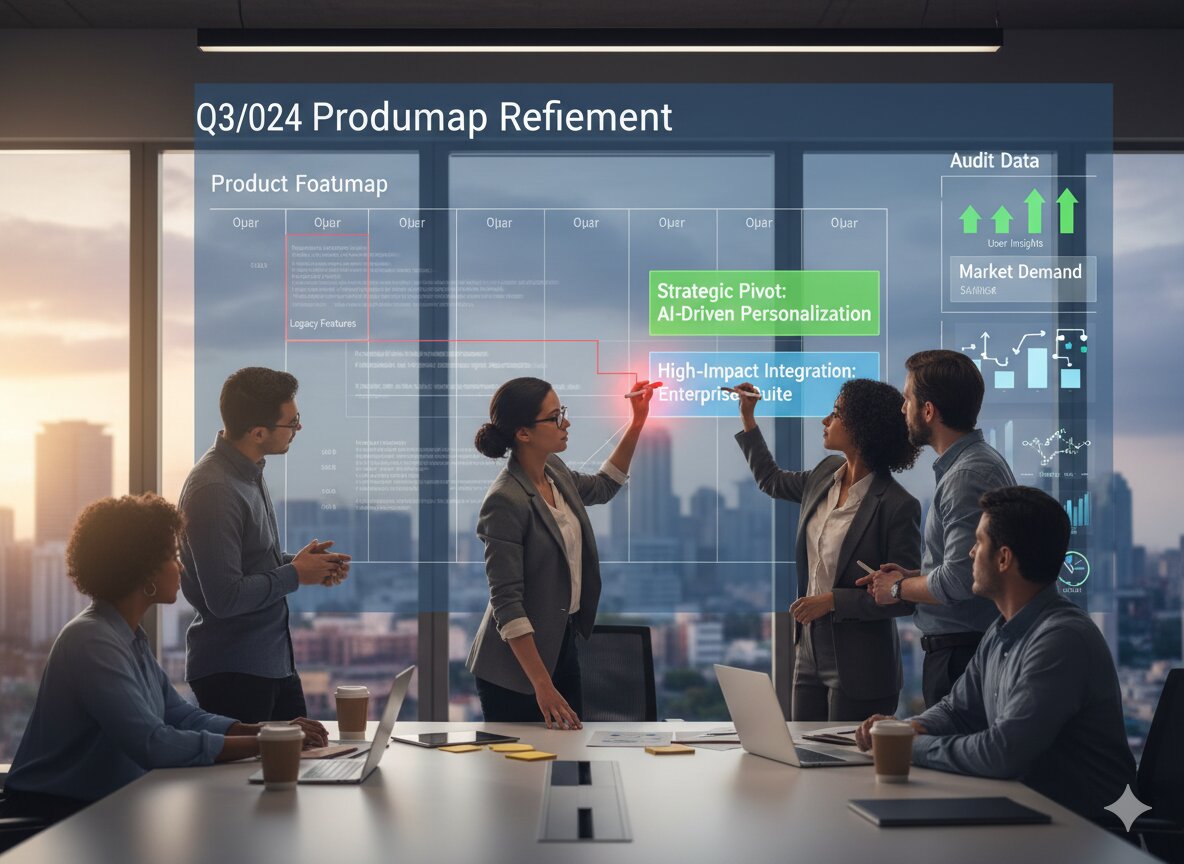

3. Governance (The "Feedback" Layer)

Who decides what is "Safe"? This must be a transparent, repeatable process.

- The Tactics: Conduct Adversarial "Red-Teaming" sessions.

- The Soundbite: "Safety isn't static. We employ professional 'Red-Teams' to try and 'jailbreak' our model before every major release. We then feed those failure cases back into our RLHF (Reinforcement Learning from Human Feedback) pipeline to continuously harden the system."

The "Move Fast" PM (Risky)The "Ethical" PM (Resilient)Views Safety as a "Launch Blocker."Views Safety as a Product Trust Moat.Relies on basic keyword filters.Uses Adversarial Testing and RAG.Fixes bias after a PR crisis.Audits for bias during the training phase.

Lead with Responsibility

Ethical AI is the ultimate test of a PM’s Moral Compass and a TPM’s Technical Rigor. You need to prove you can protect the user without making the product "boring" or "useless."

Our kits provide "AI Ethics Audit Checklists" and "Risk Mitigation Frameworks" used by safety teams at the world's leading AI labs.

- For PMs: Design safe, trustworthy AI experiences with the PM Prep Guide.

- For TPMs: Build robust safety pipelines and monitoring with the TPM Prep Kit.

FAQs

Q: Does "Safety" hurt the product's performance?

A: Sometimes. Adding safety filters can increase Latency. Your job is to optimize the "Safety Stack" so that the delay is imperceptible to the user.

Q: How do we handle "Subjective" Bias?

A: Be transparent about your Model's Alignment. Provide "System Prompts" that tell the user exactly what the AI’s instructions are. Trust comes from transparency, not perfection.

Q: What is "Human-in-the-Loop"?

A: For high-stakes decisions (Health, Finance, Legal), the AI should never be the final word. It should provide a "Draft" for a human expert to review.

.png)

.png)

.png)

.jpg)

.jpg)