The Interview Trap:

The "Magic Box" Fallacy

The interviewer asks: "We want to add a smart assistant to our customer support portal using an LLM. How do you design and launch this feature?" Most candidates treat the AI like a magic box: "I'd connect the user's prompt to GPT-4 and show the answer." Stop. In 2026, anyone can call an API. A Senior PM or TPM is expected to solve for Hallucinations, Data Privacy, and Cost. If you don't mention Retrieval-Augmented Generation (RAG) or Evaluation Rubrics, you aren't building a product—you're building a prototype.

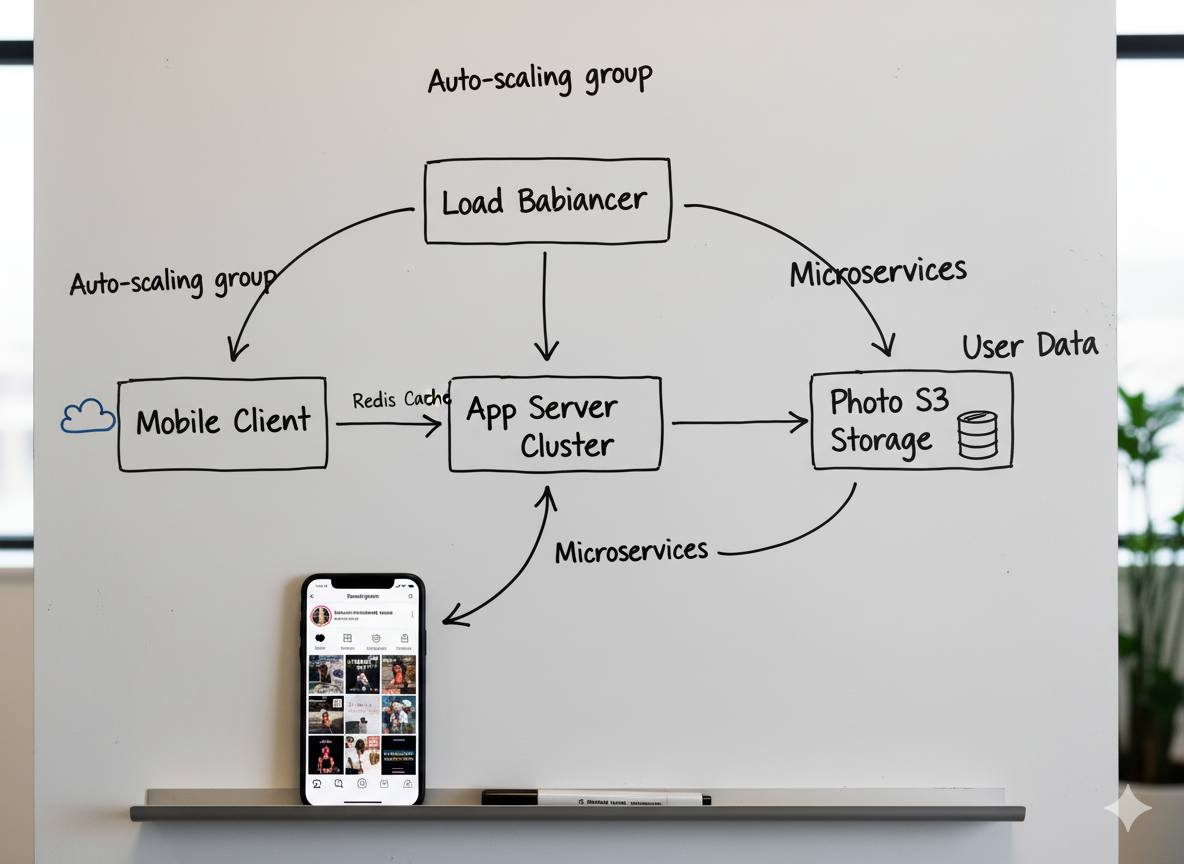

The Core Framework: The "RAG-EVAL" Method

To move from a "chatbot" to an "Enterprise AI Feature," you must focus on the infrastructure behind the prompt.

1. R-etrieval Strategy (Grounding the AI)

An LLM is only as good as the context you give it.

- The Strategy: Use RAG to feed the model your specific company data (KB articles, docs) so it doesn't "hallucinate" fake policies.

- The Soundbite: "I wouldn't rely on the model's pre-trained knowledge. I’d implement a RAG pipeline. When a user asks a question, we first perform a 'Semantic Search' in our Vector Database to find the most relevant support articles, then pass those as context to the LLM to ensure the answer is grounded in our actual policies."

2. A-ccuracy & Guardrails

How do you stop the AI from giving medical advice or swearing at customers?

- The Strategy: Define System Prompts and Output Filters.

- The Soundbite: "I’ll define a strict System Prompt that sets the persona and boundaries. I’d also implement a 'Guardrail Layer' (like LlamaGuard) that checks both the input and output for PII (Personally Identifiable Information) or toxic content before the user ever sees it."

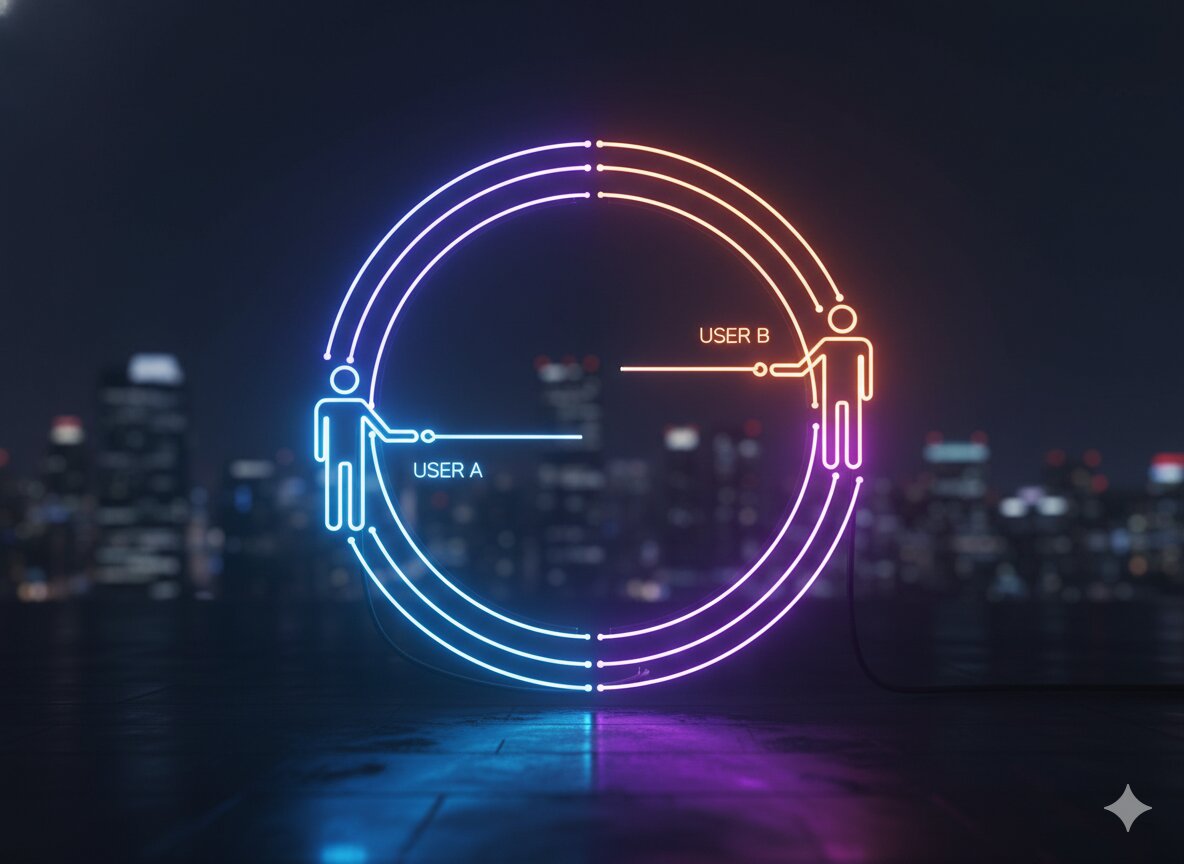

3. G-ranular Data Privacy

In the age of AI, data leakage is the #1 risk.

- The Strategy: Implement Tenant-Level Isolation.

- The Soundbite: "We must ensure that User A’s private data never ends up in the context provided for User B’s query. I’ll work with the TPMs to ensure our Vector Store has strict metadata filtering, so the retrieval step is restricted to the specific user's authorized data scope."

4. EVAL-uation & Benchmarking

How do you know if the AI is actually getting better?

- The Strategy: Create a Golden Dataset and use LLM-as-a-Judge.

- The Soundbite: "You can't A/B test your way to quality with non-deterministic models. I’ll create a 'Golden Dataset' of 100 benchmark questions with 'Ground Truth' answers. For every new model version or prompt tweak, we’ll run an automated eval using a stronger model (like GPT-4o) to grade the responses on Factuality, Tone, and Completeness."

5. L-atency & Unit Economics

AI is slow and expensive. How do you scale it?

- The Strategy: Use Semantic Caching and Model Distillation.

- The Soundbite: "To manage costs, I’ll implement 'Semantic Caching.' If a user asks a question similar to one asked 5 minutes ago, we serve the cached result instead of hitting the LLM. I’d also explore 'Small Language Models' (SLMs) for simpler tasks like 'Classification' to save on token costs and reduce latency."

The "AI Enthusiast" (Junior)The "RAG-EVAL" Leader (Senior)Focuses on "Cool" prompts.Focuses on Data Quality and Retrieval.Thinks the model is always right.Assumes the model will Hallucinate and builds filters.Manually tests a few queries.Builds Automated Eval Pipelines.

Lead the AI Revolution

AI-First thinking is no longer a niche; it is the core requirement for tech leadership in 2026. Whether you are a PM defining the UX or a TPM managing the inference infrastructure, you need to understand the "Stack."

The Kracd Prep Kits are updated weekly with the latest frameworks for LLM Ops, RAG Architecture, and AI Ethics.

- For PMs: Design intuitive AI experiences with the PM Prep Guide.

- For TPMs: Manage the complexity of AI production systems with the TPM Prep Kit.

FAQs

Q: What is "Hallucination" and can it be fixed?

A: You can't "fix" it 100%, but you can Mitigate it. Using RAG to provide "Source Citations" allows the user to verify the AI's claims, which builds trust even when the model is imperfect.

Q: Should we build our own model or use an API?

A: Start with an API. It allows for faster iteration. Only move to "Fine-Tuning" or "Self-Hosting" when you have a massive amount of proprietary data and a clear need to lower token costs or improve latency beyond what an API can offer.

Q: How do we handle "Prompt Injection"?

A: Treat LLM inputs like SQL queries—never trust them. We use a "Dual-LLM" approach where a smaller, faster model scans the user's prompt for malicious instructions before passing it to the main generation model.

.png)

.png)

.png)

.jpg)

.jpg)