The AI Trap

Most candidates focus on the Model (e.g., "We’ll use GPT-5 or Gemini 3"). Stop. Models are commodities. The value in AI-First products comes from the Proprietary Context and the User Workflow. If your AI feature can be replaced by a simple ChatGPT prompt, you don't have a product; you have a wrapper.

The Core Framework: The "3-Layer" AI Stack

1. Data Moats (The Intelligence Layer)

An AI system is only as good as the data it is trained on.

- The Strategy: Identify "Feedback Loops."

- The Soundbite: "I don't just add a Generative AI feature; I build a 'Data Flywheel.' Every time a user corrects the AI’s output, that correction is fed back into our fine-tuning pipeline. Our moat isn't the model; it's the 1 million human corrections that only we own."
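To make the "Data Flywheel" concrete in an interview, it can help to sketch how a user correction might become a fine-tuning example. The following is a minimal sketch, not a production pipeline: the `Correction` record, the field names, and the prompt/completion JSONL layout are all assumptions made for illustration, and a real fine-tuning service may expect a different schema.

```python
import json
from dataclasses import dataclass


@dataclass
class Correction:
    """Hypothetical record of one user edit to an AI output."""
    prompt: str
    model_output: str
    user_correction: str


def to_training_examples(corrections):
    """Keep only corrections where the user actually changed the output."""
    examples = []
    for c in corrections:
        corrected = c.user_correction.strip()
        if corrected and corrected != c.model_output.strip():
            # Assumed layout: the user's version becomes the target completion.
            examples.append({"prompt": c.prompt, "completion": corrected})
    return examples


def to_jsonl(examples):
    """Serialize examples as one JSON object per line."""
    return "\n".join(json.dumps(e) for e in examples)


corrections = [
    Correction(
        "Summarize ticket 1",
        "Customer is angry.",
        "Customer requests a refund for a duplicate charge.",
    ),
    # The user accepted this output unchanged, so it adds no new signal here.
    Correction("Summarize ticket 2", "Login fails on mobile.", "Login fails on mobile."),
]

examples = to_training_examples(corrections)
print(to_jsonl(examples))
```

The point to make out loud is the filter: only outputs the user actually changed carry new signal, so the flywheel depends on capturing edits, not just usage.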

2. Invisible AI (The Utility Layer)

The best AI is the one the user never sees. It should reduce "Cognitive Load," not add to it.

- The Strategy: Shift from "Generative" to "Agentic."

- The Soundbite: "I prioritize 'Anticipatory Design.' Instead of a chat box where the user has to type a prompt, our AI analyzes the user's current task and proactively suggests the next three steps. We are moving from 'Ask me anything' to 'I've done this for you'."
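One simple way to illustrate "proactively suggests the next three steps" is a frequency-based recommender over logged workflows. This is a sketch under stated assumptions: the step names and the `histories` data are invented, and a real product would likely use a learned model rather than raw counts.

```python
from collections import Counter, defaultdict


def build_transitions(histories):
    """Count how often each step is followed by each other step."""
    transitions = defaultdict(Counter)
    for steps in histories:
        for current, nxt in zip(steps, steps[1:]):
            transitions[current][nxt] += 1
    return transitions


def suggest_next(transitions, current_step, k=3):
    """Return up to k most frequent follow-up steps for the current step."""
    counts = transitions.get(current_step, Counter())
    return [step for step, _ in counts.most_common(k)]


# Hypothetical workflow logs, one list of steps per past session.
histories = [
    ["create_invoice", "add_line_items", "send_invoice"],
    ["create_invoice", "add_line_items", "apply_discount"],
    ["create_invoice", "attach_po", "send_invoice"],
    ["create_invoice", "add_line_items", "send_invoice"],
    ["create_invoice", "set_due_date"],
    ["create_invoice", "attach_po"],
]

transitions = build_transitions(histories)
suggestions = suggest_next(transitions, "create_invoice")
print(suggestions)
```

The design choice worth naming is that the user never types a prompt: the current task is the input, and the interface surfaces the suggestions on its own.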

3. Trust & Safety (The Reliability Layer)

In 2026, "Hallucination Management" is a product requirement.

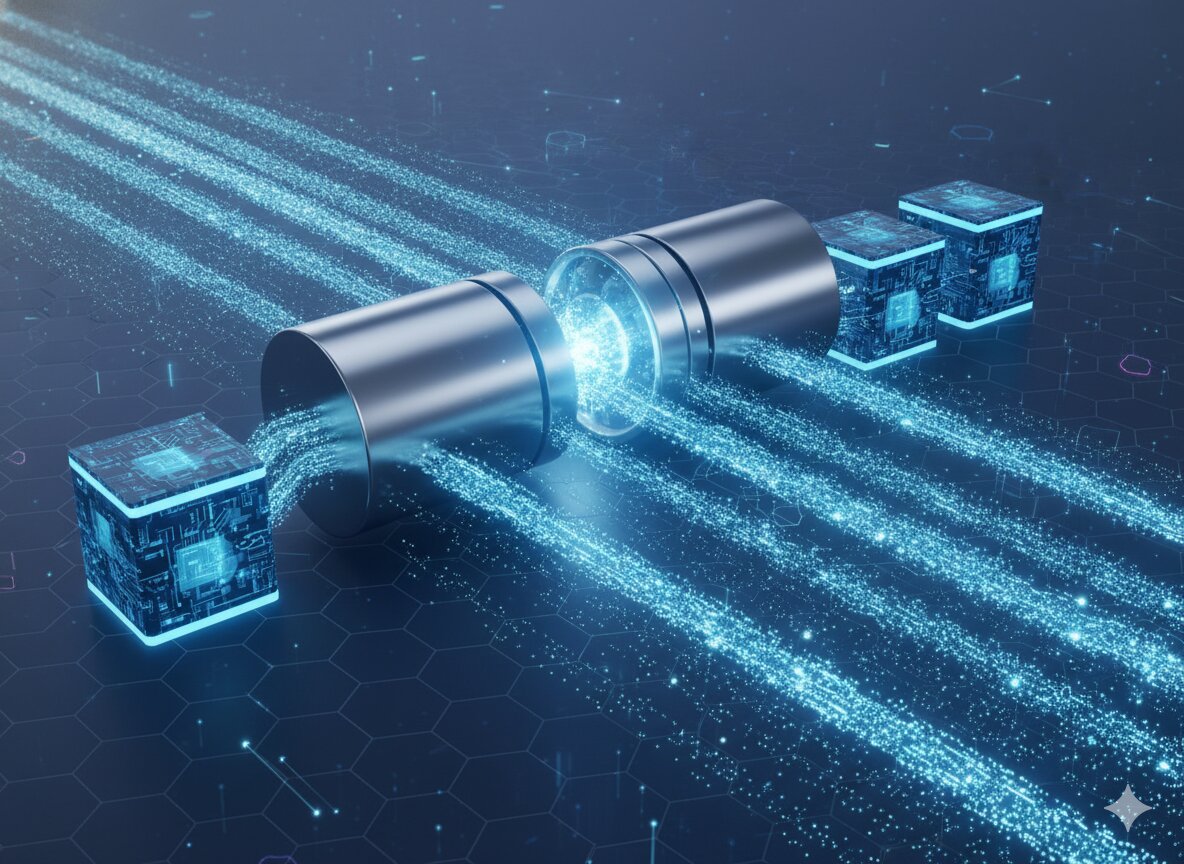

- The Tactics: Use RAG (Retrieval-Augmented Generation) to ground the AI in facts.

- The Soundbite: "We handle the 'Confidence Gap' by providing citations for every AI claim. If the model's confidence score is below 85%, we don't show the result; we route it to a human-in-the-loop. Reliability is our core feature."
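The routing rule in the soundbite can be sketched in a few lines. This assumes the system exposes a confidence score between 0 and 1 for each answer, which many models do not provide out of the box; the `Answer` type and the route labels are hypothetical. Sending uncited answers to review is an added assumption that follows from the "citations for every AI claim" requirement.

```python
from dataclasses import dataclass, field

CONFIDENCE_THRESHOLD = 0.85  # the 85% cutoff from the soundbite


@dataclass
class Answer:
    """Hypothetical AI answer with a confidence score and source citations."""
    text: str
    confidence: float
    citations: list = field(default_factory=list)


def route(answer, threshold=CONFIDENCE_THRESHOLD):
    """Show the answer only if it is confident enough and cites a source."""
    if answer.confidence < threshold:
        return "human_review"
    if not answer.citations:
        # Assumption: an uncited claim is treated like a low-confidence one.
        return "human_review"
    return "show_to_user"


print(route(Answer("Refunds take 5 days.", 0.92, ["refund-policy.md"])))
print(route(Answer("Refunds take 2 days.", 0.60, ["refund-policy.md"])))
```

In practice the threshold would be tuned against the cost of a wrong answer versus the cost of human review, rather than fixed at one number.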

| The "AI-Added" PM | The "AI-First" PM |
| --- | --- |
| Adds a Chatbot to the existing UI. | Re-imagines the UI as a Dynamic Interface. |
| Measures "Tokens Used." | Measures "Time Saved" and "Task Success Rate." |
| Thinks AI is a "Technical Feature." | Thinks AI is a New Interaction Paradigm. |

Lead the Intelligent Revolution

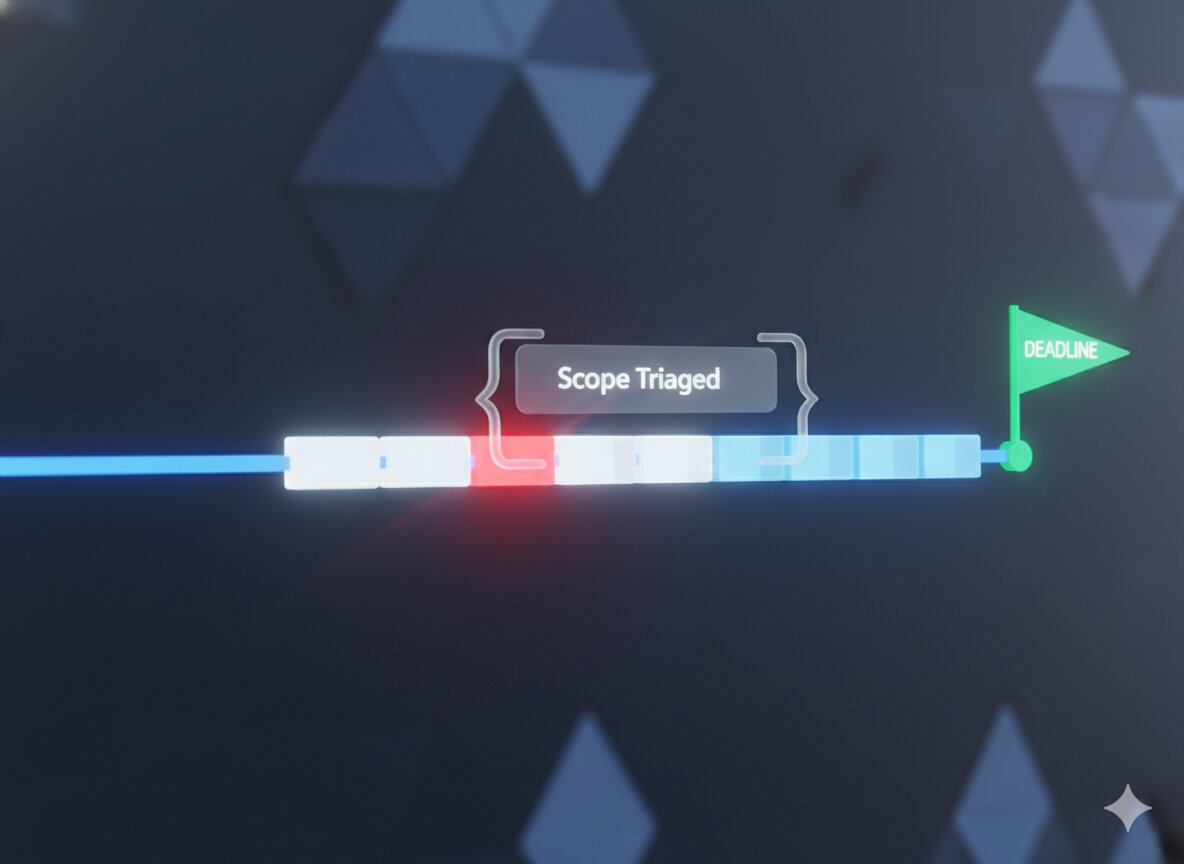

AI-First thinking requires a shift in how you manage engineering. You aren't just shipping "Code"; you are shipping "Probabilities." You need to prove you can manage the uncertainty of non-deterministic systems.

Our kits give you the "AI Product Specs" and "Evaluation Rubrics" used by AI teams at OpenAI, Anthropic, and Google.

- For PMs: Design intuitive AI experiences with the PM Prep Guide.

- For TPMs: Architect scalable RAG pipelines and model infra with the TPM Prep Kit.

FAQs

Q: Should we build our own model or use an API?

A: In 2026, 95% of products should use state-of-the-art (SOTA) APIs (like Gemini or GPT) and focus their engineering on RAG and fine-tuning. Unless you are a foundation model company, "Building your own" is usually a waste of capital.

Q: How do we measure AI success?

A: Move past "Accuracy." Measure "Alignment" (Did the AI do what the user actually wanted?) and "Human-Efficiency" (How many manual steps were removed?).
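These metrics are easy to state and harder to compute, so a small worked example can help. The sketch below assumes each user session logs whether the task was completed and how many manual steps it took before and after the AI feature; the `Session` fields and the sample numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical per-session log entry."""
    task_completed: bool
    manual_steps_before: int  # steps the same task took without AI
    manual_steps_after: int   # steps it took with the AI feature


def task_success_rate(sessions):
    """Share of sessions where the user finished what they set out to do."""
    if not sessions:
        return 0.0
    return sum(s.task_completed for s in sessions) / len(sessions)


def steps_removed_ratio(sessions):
    """Fraction of manual steps eliminated across all sessions."""
    before = sum(s.manual_steps_before for s in sessions)
    after = sum(s.manual_steps_after for s in sessions)
    return (before - after) / before if before else 0.0


sessions = [
    Session(True, 10, 4),
    Session(False, 10, 8),
    Session(True, 5, 3),
]

print(f"Task success rate: {task_success_rate(sessions):.0%}")
print(f"Manual steps removed: {steps_removed_ratio(sessions):.0%}")
```

With this sample data, two of three tasks succeed and 10 of 25 manual steps are removed, which is the kind of outcome-based number an "AI-First" PM would report instead of token counts.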

Q: What about "AI Ethics"?

A: It's not just a legal check; it's a brand promise. Audit your training data for bias, and give users a clear, transparent way to opt out of having their data used.
